An Introduction to Spectrum Sharing

The Current Scenario – Wi-Fi, GSM and CDMA: A Primer from the Perspective of Spectrum Coordination

Sharing spectrum is not a radically new idea: it's probably being shared in many places in your living room. Your family's phones could be communicating with your laptops using Bluetooth; your Wi-Fi router is sharing Wi-Fi spectrum with your next door neighbor's. There is no central brain that tells each device how to share spectrum, but each device pair (phone+laptop, for example) has some unique identifier (a code) that enables them to hear each other over the “noise” created by the other devices, as though they were speaking different languages. Each device can access the same frequencies at the same time and place, but does not know in advance which other devices are going to use them, and as long as there aren't too many such devices close to each other, the scheme works well.

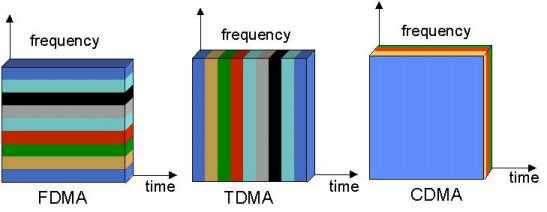

From a technological standpoint, this is one of two kinds of spectrum coordination currently in wide use. The second is where each device is given a narrow sliver of frequency to itself for a specified period of time.[1] This is what happens with GSM cellphone technology: the service provider's tower allocates a frequency, from the pool of frequencies available, to users on a per-call basis. This is called Frequency Division Multiple Access, or FDMA. GSM further divides each frequency channel between different users in the time domain, something called Time Division Multiple Access, or TDMA: you'd be sharing your frequency channel with up to seven other people,[2] and your content would be sent in sub-millisecond bursts approximately every five milliseconds.
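The burst figures quoted above can be checked with a little arithmetic. The sketch below assumes the standard GSM values of eight timeslots per TDMA frame and a frame length of 120/26 ms; neither number is stated in the text, and the variable names are only illustrative.

```python
from fractions import Fraction

# Assumed GSM air-interface figures (not taken from the article):
# one TDMA frame lasts 120/26 ms and is divided into 8 timeslots.
FRAME_MS = Fraction(120, 26)
SLOTS_PER_FRAME = 8

slot_ms = FRAME_MS / SLOTS_PER_FRAME       # duration of one user's burst
others_on_channel = SLOTS_PER_FRAME - 1    # users sharing your carrier

print(f"frame length : {float(FRAME_MS):.3f} ms")
print(f"slot length  : {float(slot_ms):.3f} ms")
print(f"other users  : {others_on_channel}")
```

Under these assumptions each user gets a burst of a little over half a millisecond once per frame, which is consistent with "sub-millisecond bursts approximately every five milliseconds" and "up to seven other people".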

Code Division Multiple Access, or CDMA, is a concept that assigns a user a 'code' for the duration of her call, which effectively makes interference from other users, with other codes, appear as noise. The following picture illustrates FDMA, TDMA and CDMA:[3]

The preceding discussion would suffice for a single cell tower, alone in a desert. In the real world, there's more than one tower, so we'll have to create a system so that no two adjacent towers end up allocating the same frequency at the same time. The simplest way to do that, and the only one currently used, is splitting the available spectrum such that the range of frequencies available to a tower does not intersect with that available to any of its neighbors, ever – that way, a tower can only allocate from its own set of frequencies, but it need not concern itself with what its neighbors are doing. If adjacent towers were to share spectrum, then the preceding condition only needs to apply at that exact moment in time – at that precise instant a tower should be aware of the frequencies being used by all towers that are close enough to interfere with it, and pick a frequency outside that set, which it can use for the duration of a call.
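The shared-spectrum rule described above (pick a frequency that no interfering tower is using at that instant) can be sketched in a few lines. This is only an illustration: the function name `pick_frequency` and the channel numbers are hypothetical, and a real system would also have to deal with the delay in learning what the neighbors are doing.

```python
def pick_frequency(pool, in_use_nearby):
    """Return the lowest channel in the pool that no interfering tower
    is using right now, or None if every channel is taken."""
    for channel in sorted(pool):
        if channel not in in_use_nearby:
            return channel
    return None

# Hypothetical snapshot: channels 1..5 are in the common pool and the
# towers close enough to interfere are currently using 1, 2 and 4.
pool = {1, 2, 3, 4, 5}
busy_nearby = {1, 2, 4}

print(pick_frequency(pool, busy_nearby))  # 3
```

With a static split, by contrast, the tower would only ever look inside its own fixed subset of the pool, whether or not its neighbors' channels happen to be idle.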

Frequency Reuse

When there weren't so many cellphones crowding up the spectrum, it did not make economic sense to invest in the extra infrastructure required to make neighboring towers 'talk' to each other with low latency. So the solution we have now, even within the towers of a single service provider, is that a tower's neighbors do not intrude upon the spectrum assigned to that tower (what counts as a neighbor here is made precise below). To start with, let's look at how towers could ideally be placed. We want to place towers on the ground in some regular pattern that makes them end up equidistant from each other: there are as many ways of doing that as there are of tiling a plane with regular shapes ('regular polygons' to the pedantic), much as you would tile a bathroom floor.

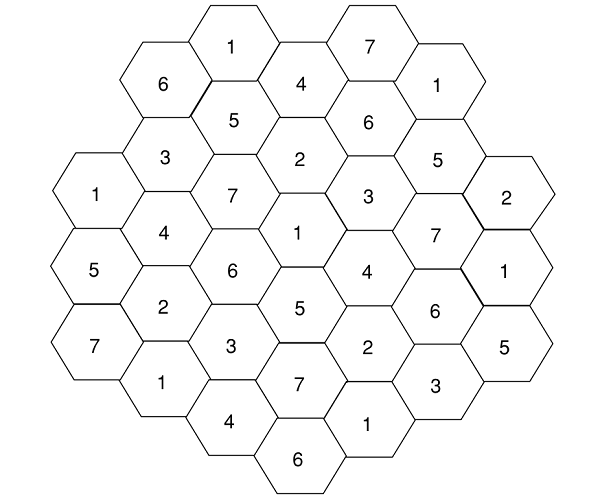

Starting from the simplest, we can do it with tiles shaped like triangles, squares or hexagons, and a little thought will convince you that these are the only choices. Since a tower's signal would be 'strong enough' only up to some maximum radius, we'd ideally like to tile our plane with circles, but if we settle for the next best thing, the closest shape to a circle with which to tile the plane is a hexagon, in a honeycomb pattern; if you're looking at it from above, the towers would be placed as in the diagram below.[4]

This is just a part of a much larger honeycomb on the ground; the towers go in the center of the hexagons, where the numbers are; why the numbers are as they are will become clear in a couple of lines. Let's focus on tower 1 in the center of the diagram for our example. Suppose the signals decay slowly enough that those radiated from the nearest neighbors (the towers surrounding 1, i.e. 2 through 7) and the next-nearest neighbors (towers two steps away from 1, also numbered 2 through 7) interfere significantly with tower 1 in the center, but those from the next-to-next-nearest neighbors (three steps away from 1) do not. Then the frequency reuse pattern can be like what we see in the diagram above, with towers denoted by the same number (and only the same number) using the same exclusive set of frequencies. In this example, the closest towers with the same frequencies as the central tower are the 1's in the hexagons at the edge, so the frequency reuse factor is 3 (see footnote [5]). In this diagram, the ordering of the numbers makes no difference: the situation would be the same if we exchanged the position of, say, every 1 and 3.
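One way to reproduce a seven-group honeycomb pattern like the one described is to label each hexagon from its grid coordinates. The sketch below assumes 'axial' hexagon coordinates (q, r) and the labelling rule (q + 3r) mod 7; both are illustrative choices, not taken from the source diagram, and any relabelling of the groups would work equally well.

```python
def channel_group(q, r):
    """Channel group (1..7) of the cell at axial hex coordinates (q, r)."""
    return (q + 3 * r) % 7 + 1

def hex_steps(q, r):
    """Number of cell-to-cell steps from the origin cell to (q, r)."""
    return (abs(q) + abs(r) + abs(q + r)) // 2

def nearest_cochannel_steps(search_radius=5):
    """Smallest number of steps to another cell in the origin's group."""
    home = channel_group(0, 0)
    distances = [
        hex_steps(q, r)
        for q in range(-search_radius, search_radius + 1)
        for r in range(-search_radius, search_radius + 1)
        if (q, r) != (0, 0) and channel_group(q, r) == home
    ]
    return min(distances)

print(channel_group(0, 0))        # 1
print(nearest_cochannel_steps())  # 3
```

With this rule the six cells around tower 1 get groups 2 through 7, no cell one or two steps away repeats group 1, and the first repeat is three steps out, matching the reuse factor of 3 in the sense of footnote [5].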

In reality the grid of towers of a particular operator covering a city is rarely hexagonal, due to local constraints, so what matters is that a tower does not use the frequencies that its nearest neighbors, next-nearest neighbors and so on are using, out to the distance set by the frequency reuse factor.[5] It's clear that, with an FDMA/TDMA system like GSM and without the towers being able to communicate in near-real time, this is the optimal (and, in fact, the only) way to go.

Neighboring Towers Sharing Spectrum

Everything changes, though, if the towers can communicate and coordinate fast enough: in theory, at least, all of a service provider's towers could pick spectrum from a common pool.[6] In fact, all the service providers could put their spectrum into a common pool from which frequencies can be allocated to users as before. This would increase trunking efficiency, and thereby the maximum number of users per tower allowed by quality of service limits (both terms are explained in the next section), making more efficient use of the spectrum.

The Current Trade-off between Trunking and 'Economic' Efficiency: The Principal Argument for (Inter-operator) Shared Spectrum

Imagine the following scenario:

- We have 5 MHz of spectrum, split into five channels of 1 MHz each;

- Five thousand people own cell phones and each is assigned a channel so that there are a thousand cellphone users per channel;

- People call infrequently: calls are randomly distributed but on average, in each channel, five people attempt to make a call every minute and each call is ten seconds long.

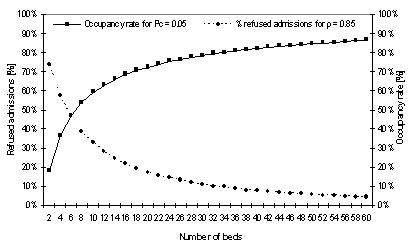

In this way, a lot of people can use a few channels with a reasonable hope that their calls will be connected, a phenomenon called 'trunking'. Chances are high, however, that at least one person's going to make a call before the previous caller on her channel is done, and end up being blocked. The probability that a call will go through is factored into the Quality of Service (QoS) through the Erlang B formula; roughly speaking, the less chance there is of a caller being blocked, the higher your QoS. It's essentially a question of queuing: the same logic can be applied to beds at a hospital. The number of hospital beds in a town is far smaller than the number of people, but it works because not everyone is sick at the same time; if people are sick more often, or for longer durations, the chances that someone won't get a bed would be higher:[7]

Suppose someone owns an Airtel phone and Airtel's channels are all in use, but Vodafone has a channel free at the time. Let's look at two alternatives:

a) she's not allowed to switch, and cannot make her call;

b) she's allowed to switch to the empty channel, and her call goes through.

Clearly, the second choice is better — and it has greater trunking efficiency.
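The gain from pooling can be put into rough numbers with the Erlang B formula mentioned earlier. The sketch below uses the usual recursive form of the formula and the figures from the scenario above (five call attempts per minute per channel, ten seconds each). It assumes randomly arriving calls and that a blocked call is simply lost; the function and variable names are illustrative.

```python
def erlang_b(offered_load, channels):
    """Erlang B blocking probability for `offered_load` erlangs
    offered to a group of `channels` channels (recursive form)."""
    blocking = 1.0  # with zero channels every call is blocked
    for n in range(1, channels + 1):
        blocking = offered_load * blocking / (n + offered_load * blocking)
    return blocking

# Scenario from the text: 5 attempts per minute per channel, 10 s each.
load_per_channel = 5 * (10 / 60)             # erlangs offered to one channel

split = erlang_b(load_per_channel, 1)        # five isolated single channels
pooled = erlang_b(5 * load_per_channel, 5)   # one shared pool of five

print(f"blocked, separate channels: {split:.1%}")
print(f"blocked, pooled channels  : {pooled:.1%}")
```

Under these assumptions roughly 45% of call attempts are blocked when each user is tied to one channel, against roughly 21% when the same five channels are pooled: same spectrum, same callers, fewer blocked calls.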

In the current scenario, service providers get exclusive rights to chunks of spectrum. Naively, the more competitors (in this case, service providers like Airtel and Vodafone) you have in a market, the better the competition. This, unfortunately, leads to a decrease in trunking efficiency: it falls as the number of players in the market rises, because every split of a chunk of frequencies between two service providers increases the chances of an event such as the one described above. The question that logically follows is: what is the optimal number of service providers for the Indian market? This is hard to determine, and the answer differs depending on who you ask: incumbents, for instance, may quote a smaller number, whereas prospective new entrants may quote a larger one. The number is controversial within policy-making circles as well, and is being debated as this article is being written. We note in passing that the number of competitors, and thus the fragmentation of spectrum, is higher in the Indian market than in most others.

If spectrum were shared, however, all this would be moot. This, therefore, is the primary argument for spectrum sharing: better trunking efficiency as well as more competition. You can, in this instance, have it both ways.

CDMA and Spectrum Sharing

GSM is a simple example, where both the difficulty and the benefits of intra-operator spectrum sharing are readily apparent. Things get more difficult conceptually if we talk about newer technologies, so we'll have to get a little deeper into the technicalities. Code Division Multiple Access, or CDMA, allows phones to communicate using the same frequencies at the same time and place, but differentiated by codes: similar to Wi-Fi, but using different encoding schemes and technology. CDMA might appear (from the analogy with Wi-Fi) to require no central planning, but quality of service guarantees require that the various phones in a 'cell' coordinate, and the coordinating agent happens to be that cell's tower. Two things need to happen. One, the codes allocated to the phones need to be sufficiently different,[8] at least with respect to other nearby phones, which means the tower has to allocate codes. Two, the distances involved between cellphone and tower (as against laptop and router) cause the near-far problem,[9] which the tower has to manage.
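To make 'sufficiently different' concrete for the synchronous case, here is a minimal sketch of two phones transmitting at the same time on the same frequency with orthogonal codes. The two length-4 Walsh codes and the helper names are illustrative assumptions; real systems use much longer codes and add noise, timing and power effects that are ignored here.

```python
# Two length-4 Walsh codes (rows of a Hadamard matrix); chips are +1/-1.
CODE_A = [1, 1, -1, -1]
CODE_B = [1, -1, 1, -1]

def spread(bit, code):
    """Multiply one data bit (+1 or -1) by every chip of the user's code."""
    return [bit * chip for chip in code]

def despread(signal, code):
    """Correlate the received chips with one code and decide the bit."""
    correlation = sum(s * chip for s, chip in zip(signal, code))
    return 1 if correlation > 0 else -1

# Both phones transmit at once; the tower receives the chip-wise sum.
bit_a, bit_b = 1, -1
received = [a + b for a, b in zip(spread(bit_a, CODE_A), spread(bit_b, CODE_B))]

print(despread(received, CODE_A), despread(received, CODE_B))  # 1 -1
```

Because the two codes multiply and sum to zero, each correlation picks out one phone's bit and the other phone's contribution cancels exactly.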

For synchronous CDMA, the concept analogous to frequency reuse is code reuse: a tower needs to take into account the codes being used by its nearest neighbors, next-nearest neighbors and so on, which might be easier than coordinating timing in a TDMA system. For asynchronous CDMA (the most commonly used variant), even that is not required: the low cross-correlation pseudorandom codes that are used have so many possibilities that the likelihood of a collision is small. Other users do, however, appear as Gaussian noise, so, just as in GSM, the number of users is capped by QoS limits. This makes intra-operator sharing of spectrum between adjacent towers easier: asynchronous CDMA ends up with a frequency reuse factor of 1, meaning that a tower can access the same set of frequencies as its (intra-operator) neighbor, hypothetically making it easier to use in a shared-spectrum system.
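The claim that pseudorandom codes look like low-level noise to one another can be illustrated numerically. In the sketch below, Python's random number generator stands in for a real spreading-code generator, and the code length of 1024 chips and the count of 20 other users are arbitrary assumptions.

```python
import random

def random_code(length, rng):
    """Pseudorandom spreading code of +1/-1 chips."""
    return [rng.choice((-1, 1)) for _ in range(length)]

def normalized_correlation(code_x, code_y):
    """Inner product divided by code length: 1.0 for identical codes."""
    return sum(x * y for x, y in zip(code_x, code_y)) / len(code_x)

rng = random.Random(2024)   # fixed seed so the run is repeatable
length = 1024               # chips per code (illustrative value)
mine = random_code(length, rng)
others = [random_code(length, rng) for _ in range(20)]

worst = max(abs(normalized_correlation(mine, other)) for other in others)
print(f"self-correlation       : {normalized_correlation(mine, mine):.2f}")
print(f"worst cross-correlation: {worst:.3f}")
```

The cross-correlations are small but not zero, which is why each additional user raises the noise floor a little and the number of users ends up limited by quality of service rather than by a hard count of codes.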

LTE

LTE uses Orthogonal Frequency Division Multiplexing, or OFDM, which can be thought of, very roughly, as combining ideas used in FDMA as well as CDMA: information is redundantly split between several frequencies ('subcarriers' in the literature), and each frequency can carry more than one channel using an orthogonal coding scheme like (synchronous) CDMA, where, as mentioned earlier, a mobile phone can distinguish its channel by its code. As it is, at heart, an FDMA system, the benefits of frequency sharing for LTE can be inferred as above for GSM.
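The 'orthogonal' in OFDM refers, in the usual textbook description, to the subcarriers themselves: tones spaced at whole multiples of one over the symbol period do not leak into each other when correlated over one symbol. The sketch below checks this numerically; the symbol length of 64 samples and the subcarrier indices are arbitrary choices for illustration.

```python
import cmath

def subcarrier(index, n_samples):
    """One symbol period of the complex tone at subcarrier `index`."""
    return [cmath.exp(2j * cmath.pi * index * n / n_samples)
            for n in range(n_samples)]

def inner_product(x, y):
    """Correlation of two sampled tones over one symbol period."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

N = 64  # samples per symbol (illustrative value)
same = abs(inner_product(subcarrier(3, N), subcarrier(3, N)))
different = abs(inner_product(subcarrier(3, N), subcarrier(4, N)))

print(f"same subcarrier     : {same:.1f}")
print(f"different subcarrier: {different:.1e}")
```

The second figure is zero up to floating-point rounding, so data placed on neighboring subcarriers can be separated at the receiver even though their spectra overlap.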

The Regulatory Perspective

The European Commission has this to say about shared spectrum:[10] “From a regulatory point of view, band sharing can be achieved in two ways: either by the Collective Use of Spectrum (CUS), allowing spectrum to be used by more than one user simultaneously without a license; or using Licensed Shared Access (LSA), under which users have individual rights to access a shared spectrum band”.

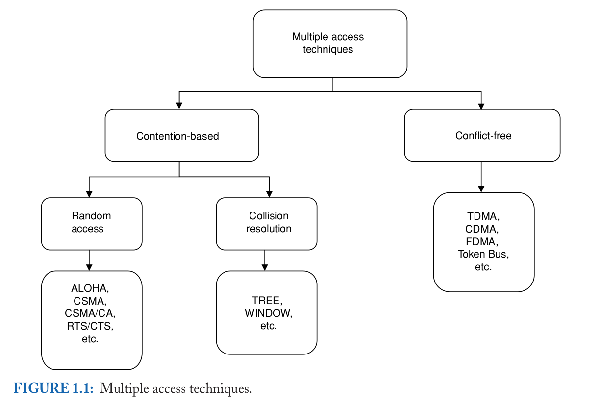

CUS is how unlicensed spectrum like Wi-Fi is currently used, which does not require a central 'brain' allocating spectrum to users. It requires no setup or organization before or during use. LSA is what shared spectrum would have to be like when used by service providers: it requires setup and organization but could offer better efficiency and quality of service because the central 'brain' — in this case the CPU at the cellphone tower — can figure out the most efficient way to allocate spectrum to users, just like a city's traffic lights coordinate the flow of traffic to prevent jams, and for that multiple towers — or multiple transmitters on a single tower — would have to coordinate somehow. In other words, you don't require approval before setting up your Wi-Fi router in your living room, but (depending upon the router, how many neighbors have routers, how close they are, and how far you are from your router) your connection might get dropped; this kind of thing is okay because there usually aren't that many people with routers living that close to each other, though that's fast changing. The 2.4 GHz Wi-Fi band is further crowded in by other microwave radio technologies, like Bluetooth and microwave ovens. Cellphones are a different thing altogether, because you wouldn't want your cellphone to stop working in the middle of a crowded bus if you're late en route to meeting someone at a coffee shop, or if you're being mugged and need to call the police. Therefore it is the service providers' and regulatory agencies' responsibility to provide a high (minimum) quality of service. This classification is symbolized by the following diagram:[11]

CUS falls on the left, being contention-based – that is, different user devices (eg, laptops) could contend with each other for the attention of the base station (eg, Wi-Fi router — random access, CSMA), whereas LSA is conflict-free (which would be the case if the router decides, period). The potential for conflict exists in CUS, there being multiple devices asking for spectrum, whereas for LSA, a central authority decides which device to allocate spectrum to at any particular point in space and time. CUS isn't total chaos, however: it would now be appropriate — taking a leaf from ex-FCC chief technology officer Jon M. Peha – to introduce the concepts of coexistence and etiquette.
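The difference between contention-based and conflict-free access can be seen in a toy simulation. The sketch below is a deliberately crude model, not CSMA as Wi-Fi implements it: time is cut into slots, every device always has data to send, contending devices each transmit with a fixed probability without listening first, and the central scheduler costs nothing. All names and parameter values are assumptions for illustration.

```python
import random

def contention_successes(n_devices, p_transmit, n_slots, rng):
    """Count slots in which exactly one device transmitted (no collision)."""
    successes = 0
    for _ in range(n_slots):
        talkers = sum(rng.random() < p_transmit for _ in range(n_devices))
        if talkers == 1:
            successes += 1
    return successes

def scheduled_successes(n_devices, n_slots):
    """A central scheduler grants every slot to exactly one device."""
    return n_slots if n_devices > 0 else 0

rng = random.Random(7)      # fixed seed so the run is repeatable
slots = 10_000
contended = contention_successes(n_devices=10, p_transmit=0.1,
                                 n_slots=slots, rng=rng)
scheduled = scheduled_successes(n_devices=10, n_slots=slots)

print(f"contention-based: {contended / slots:.0%} of slots carry data")
print(f"conflict-free   : {scheduled / slots:.0%} of slots carry data")
```

In this model the uncoordinated devices waste most slots on silence or collisions, while the scheduler uses every slot. Real etiquettes such as listening before talking narrow that gap, and real schedulers pay a signalling cost that is ignored here.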

In our Wi-Fi example, the Wi-Fi routers merely coexist, and the technological standard allows them to try and use the codes/spectral bands that are in their best interests, to best communicate with their client devices (though actual Wi-Fi routers also follow some sort of etiquette with other routers). One could additionally introduce some sort of etiquette into the equation by requiring that one router should, for example, “wait in the queue” for another, and vice versa, as and when required, as well as other requirements for cooperation depending upon the technology used. This minimal cooperation would be enough for them to, in Mr Peha's words, “greatly improve efficiency if and only if designed appropriately for the applications in the band”: depending upon the technology used, being too 'polite' could cause longer wait times that decrease efficiency. The situation is complicated by the existence of multiple technologies at the same spot: for example, your Bluetooth receiver, two-way radio and Wi-Fi router working in the same room. If there is potential for interference, common communication protocols could be implemented to enable all those devices to 'talk' to each other and effectively follow some form of wireless etiquette, so that they can cooperate and not get in each other's way. This is all the more important as Wi-Fi will become an essential part of the cellphone communication network for 4G.

To conclude, there are many ways shared spectrum technology could hypothetically work, and in practice the core technologies that are used would dictate the details of the spectrum sharing solution. Spectrum sharing would ease the regulatory conundrum that is spectrum allocation, and make more efficient use of spectrum: most obviously through trunking efficiency, though there may be other technological benefits depending upon the core technology used. For maximum efficiency and robustness, there would have to be some kind of rules followed, so that devices apply for spectrum like people in a cafeteria queue as opposed to the scrum you might find trying to get into an Indian bus; the etiquette we were talking about earlier should be baked into the design of the communication infrastructure. Some services (like voice calling), by their nature, need a guaranteed high QoS (that is, they need to be conflict-free) and therefore need Licensed Shared Access. Others need a minimum of regulation. But with the movement of what used to be CUS-appropriate devices (specifically Wi-Fi, in many plans for 4G LTE-Advanced) towards LSA-appropriate applications, a careful optimization needs to be done in deciding where to draw the line.

The Big Question: Infrastructure Sharing

We've gone through a thought experiment on intra- and inter-operator sharing of spectrum for the particular case of mobile towers in adjacent cells, and come to the general conclusion that the solution is in principle a question of fast and efficient coordination between the geographically separated towers, toward which there are two driving forces at present: the demand for more efficient use of spectrum by a growing body of users with growing data needs, and the supply of low latency, cheaper and higher bandwidth communication options using fiber-optic cables.

There are essentially two parts to the big question we're going to ask: one, what happens when there are multiple operators serving the same geographical area, and two, is it necessary to have multiple towers standing right next to each other for multiple operators?

To answer the first question, one could have a 'roaming' agreement between multiple operators at the same spot: if all the channels of one operator are busy, the user just has to switch to a channel of an operator which isn't. For the second, a single tower (the physical tower structure as well as the transmitting equipment on it) could serve any operator, who could rent its usage on a per-call basis. That, in fact, already seems to be the case: Airtel and Vodafone, for instance, each own a 42% share in India's largest tower corporation, Indus Towers, the remaining 16% belonging to Idea Cellular.

Infrastructure sharing will be explored further in a forthcoming post.

Coarse-grained Spectrum Sharing

For completeness, we should point out that there are more coarse-grained (simpler but less efficient) means of sharing in time as well as geography: the appropriate thought experiment is to imagine a radio station at the base of a hill that only has two shows, one for breakfast and one for dinner. Using its radio spectrum on the other side of that hill, or beyond the area it serves, would be fine at any time; using its spectrum in between the morning and evening shows would be fine anywhere.
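The radio-station thought experiment boils down to a simple rule: a second user may transmit if they are outside the station's coverage or if the station is off the air. A minimal sketch follows, in which the service radius, the show times and the function name `may_reuse` are all made-up values for illustration, and the hill is reduced to a plain distance check.

```python
def may_reuse(distance_km, hour, service_radius_km=50.0,
              show_hours=((6, 9), (18, 21))):
    """True if a secondary user may transmit without disturbing the station."""
    outside_area = distance_km > service_radius_km
    on_air = any(start <= hour < end for start, end in show_hours)
    return outside_area or not on_air

print(may_reuse(distance_km=10, hour=7))    # False: inside the area, show on air
print(may_reuse(distance_km=10, hour=13))   # True: between the two shows
print(may_reuse(distance_km=80, hour=7))    # True: beyond the service area
```

Nothing here needs real-time coordination: a published schedule and a coverage map are enough, which is what makes this kind of sharing simpler but less efficient than the fine-grained schemes discussed earlier.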

Caveats

It must be emphasized at this point that the above is a purely hypothetical scenario, and not a prescription. Getting this to work would involve technical hurdles that a brief overview such as this one cannot bring up, and that could only be discovered in the process of bringing the technology to market. Each technological solution (GSM, CDMA and LTE) would present its own difficulties, which may become apparent only when the product is shipped, so to speak. Fine technical judgments would need to be made: an example of the difficulty involved can be gauged from the early debates comparing the first CDMA standard (IS-95) with GSM.

The economic model to use for shared spectrum and shared infrastructure is also something under intense discussion right now, and a number of scholarly papers have already been written up.

[1]. This is what you'd get in your first few Google search results when you look for “shared spectrum”, because the former has become so widely accepted that it's now part of the linguistic background.

[2]. Explained on http://www.radioraiders.com/gsm-frequency.html, referring to 3GPP spec http://www.3gpp.org/ftp/Specs/latest/Rel-7/45_series/45005-7d0.zip

[3]. From http://www.umtsworld.com/technology/cdmabasics.htm

[4]. From Mike Buehrer, William Tranter-Code Division Multiple Access (CDMA)-Morgan & Claypool Publishers (2006).

[5]. There are multiple definitions; the simplest one is “how many steps (in cells) that you have to walk from the tower before you can reuse the frequency”, which will suffice for us.

[6]. Of course, it's going to be messier in practice.

[7]. From http://www.vumc.com/branch/PICA/Software/

[8]. Orthogonal for synchronous CDMA, or 'sufficiently' orthogonal for asynchronous CDMA

[9]. Remember that the receiver on the tower has to demux (split) the signals received from many cellphones, and while a Wi-Fi router would perhaps service multiple laptops in the same building, a CDMA tower has to work for a couple of hundred phones at varying distances: some a building-length away and some many kilometers away. Every receiver has its own minimum signal-to-noise ratio: the strength of the signal received has to be more than a certain fraction (which can be quite small, for a good receiver) of the strength of the electromagnetic (radio) noise it receives from other sources; cellphone towers have to cope with much lower signal-to-noise ratios than Wi-Fi routers. For an FDMA or TDMA system, different users' data arrives in different frequency or time slots, so as long as those slots are properly differentiated, one user's signal won't be another user's noise. For the commonly used asynchronous CDMA system, however, this is not the case, so at a receiver on a tower, the signal transmitted by a distant cellphone could be swamped by that from a much closer phone. The way this is dealt with is to have phones closer to the tower decrease their transmission power. So even in CDMA, the tower is still telling the phone what to change, only in this case it's the transmission power as opposed to the exact frequency and time.

[10]. http://ec.europa.eu/digital-agenda/en/promoting-shared-use-europes-radio-spectrum

[11]. From Mike Buehrer, William Tranter-Code Division Multiple Access (CDMA)-Morgan & Claypool Publishers (2006)